Task Description

Interaction behaviors wanted

- We want to be able to use primary grasps as a basis to grasp and manipulate an articulated object, the pliers.

- We want to use the controller’s GRIP button to control grasping and, once the pliers are grasped, to use the TRIGGER button to animate the closing and opening of the pliers.

Solution

In the VirtualGrasp SDK, the solution to this task is packaged in VirtualGrasp/Scenes/onboarding as “Task 9”.

VirtualGrasp/Scenes/onboarding/VG_Onboarding.unity

Since grasps generated by VG are static hand poses on individual solid objects, creating in-hand manipulation of an articulated object such as the pliers in this example requires the following steps:

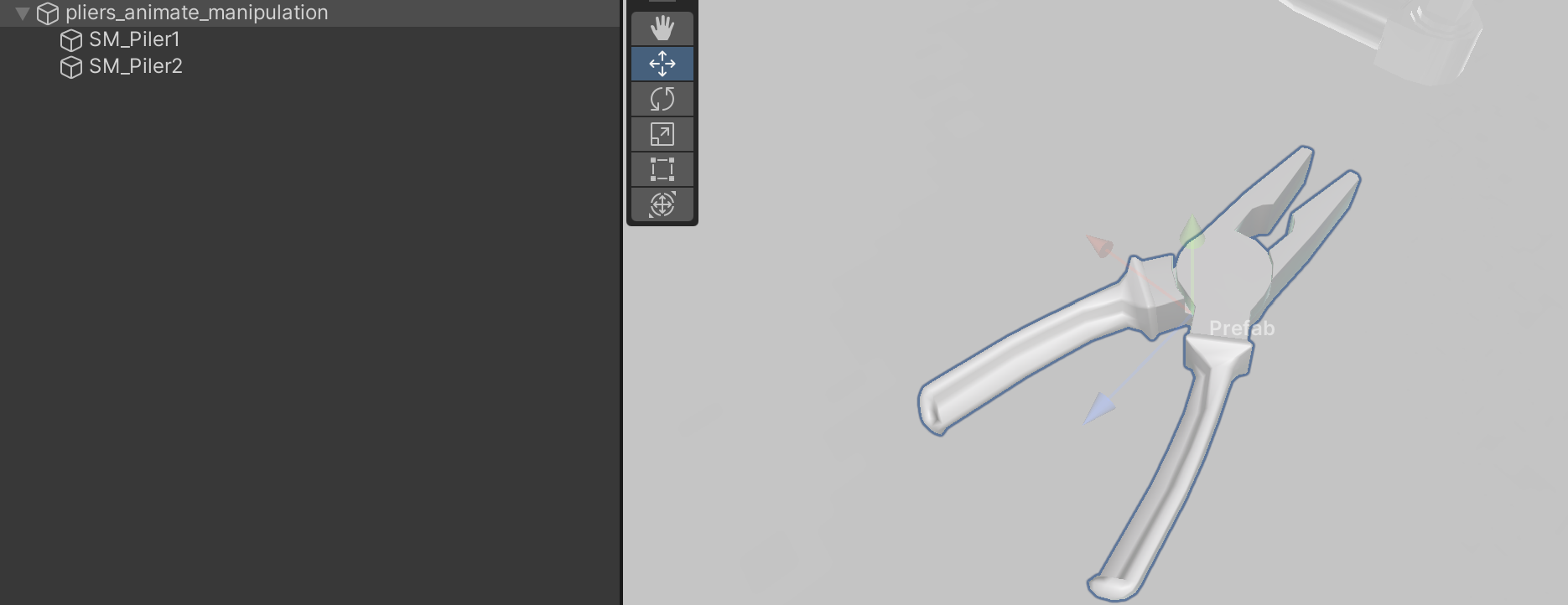

Create composite object model of articulated object for primary grasps

First we need a composite object model (see image above) that contains a separate mesh for each of the two articulated parts, plus a combined mesh that models both parts together in their initial articulated pose.

The combined mesh should be marked as an interactable object by adding VG_Articulation to its transform. Then bake the object using VG_BakingClient. Once the object is baked, use VG_GraspEditor to add primary grasps for both the left and right hands.
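The setup order above (mark the combined mesh as interactable, bake it, then add primary grasps for both hands) can be summarized as a small checklist model. This is a minimal Python sketch for illustration only: `CompositeObject` and its methods are hypothetical names, not part of the VirtualGrasp SDK, and the ordering checks are assumptions that mirror the steps described here rather than checks VG itself performs.

```python
from dataclasses import dataclass, field


@dataclass
class CompositeObject:
    """Hypothetical checklist model: one combined mesh plus one mesh per articulated part."""
    combined_mesh: str
    part_meshes: list[str]
    has_vg_articulation: bool = False
    baked: bool = False
    primary_grasps: set[str] = field(default_factory=set)

    def mark_interactable(self) -> None:
        # Step 1 (assumed order): add VG_Articulation to the combined mesh transform.
        self.has_vg_articulation = True

    def bake(self) -> None:
        # Step 2: this sketch only allows baking after the object is marked interactable.
        if not self.has_vg_articulation:
            raise RuntimeError("add VG_Articulation before baking")
        self.baked = True

    def add_primary_grasp(self, hand: str) -> None:
        # Step 3: this sketch only allows primary grasps on a baked object.
        if not self.baked:
            raise RuntimeError("bake the object before adding primary grasps")
        if hand not in ("left", "right"):
            raise ValueError(f"unknown hand side: {hand}")
        self.primary_grasps.add(hand)

    def ready_for_animation(self) -> bool:
        # Two articulated parts and primary grasps for both hands are required.
        return len(self.part_meshes) == 2 and self.primary_grasps == {"left", "right"}


# Hypothetical mesh names, used only for this example.
plier = CompositeObject("plier_combined", ["plier_part_1", "plier_part_2"])
plier.mark_interactable()
plier.bake()
for hand in ("left", "right"):
    plier.add_primary_grasp(hand)
print(plier.ready_for_animation())  # True
```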

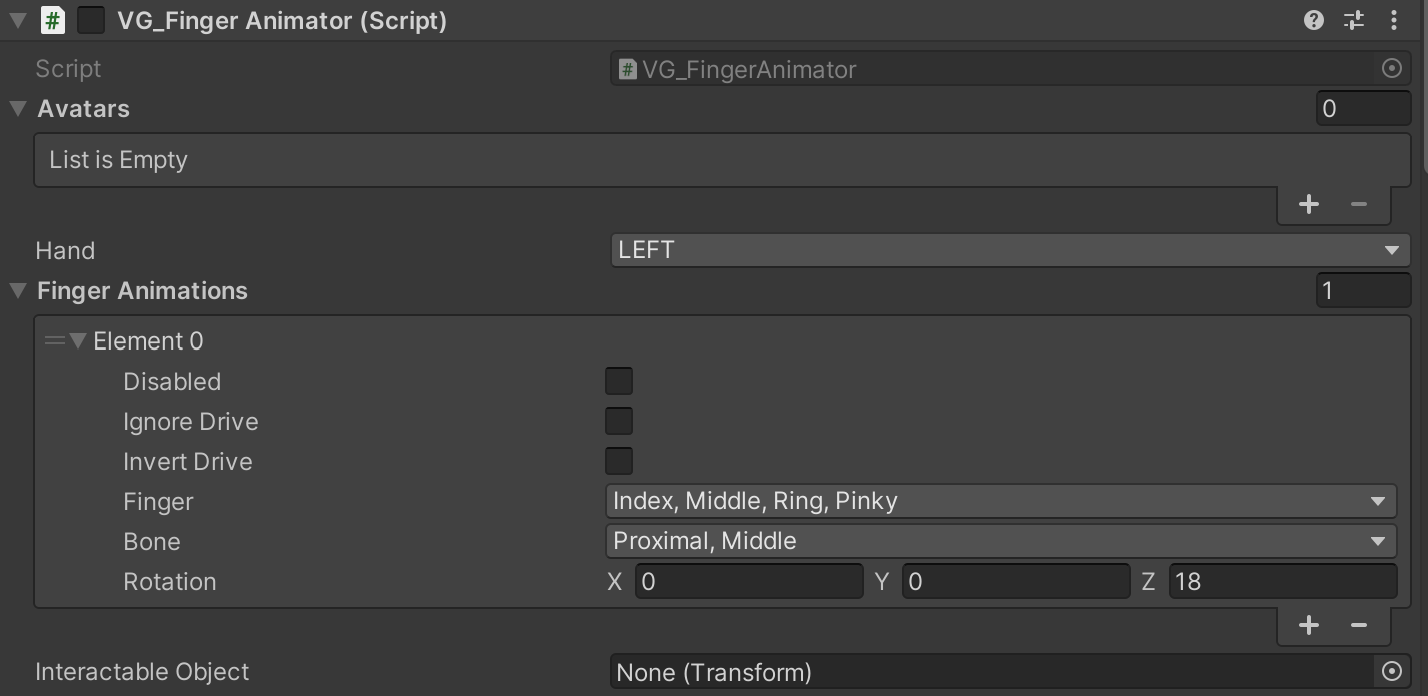

Create post-grasp finger animation

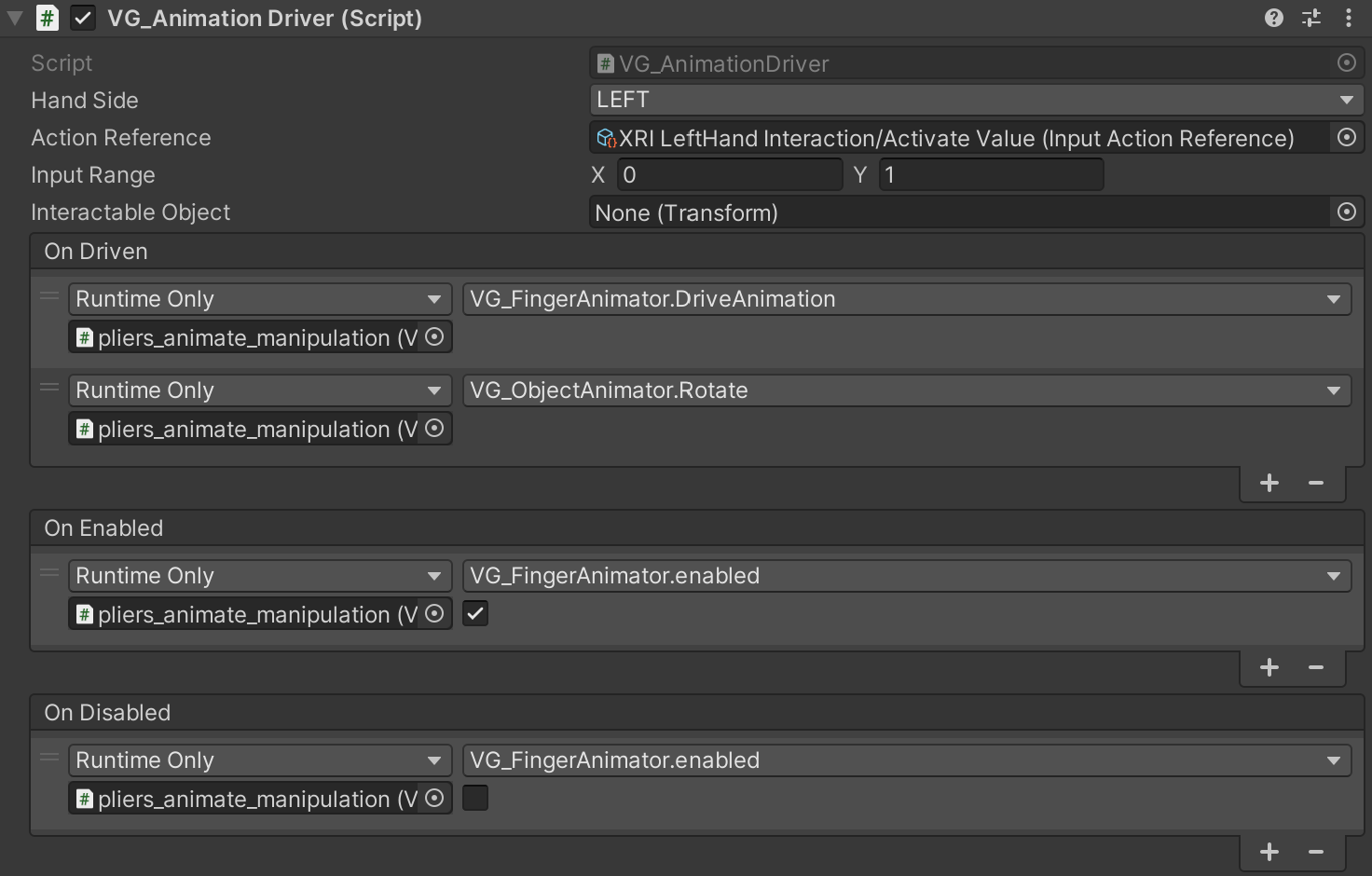

Once primary grasps are added for both hands, finger animation can be created using the primary grasp finger poses as the starting point. For each hand side, a VG_FingerAnimator and a VG_AnimationDriver component are added to the object. The image above shows the setup for the left hand:

- The VG_FingerAnimator specifies that the proximal and middle bones of the four non-thumb fingers are to be animated, with a target local rotation of 18 degrees around the Z-axis of each bone transform, relative to the initial finger pose.

- The VG_AnimationDriver's Action Reference is set to the value of the controller’s TRIGGER button, with an Input Range of [0, 1], as the input signal that drives the animation. The VG_FingerAnimator.DriveAnimation(float) function listens to the On Driven event and receives this input value, which drives the finger animation by rotating the bones from the initial pose to the 18-degree target rotation.

- We set the VG_FingerAnimator to be enabled and disabled in sync with the VG_AnimationDriver by listening to its On Enabled and On Disabled events. Since the VG_AnimationDriver is only enabled while the Interactable Object is grasped by the hand, this prevents the fingers from being animated when no object is grasped, and ensures that the animation starts from the primary grasp finger poses.
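The finger setup above can be sketched as follows, assuming that the driven value maps linearly to an angle between the initial pose and the 18-degree target, and that bone rotations are stored as local quaternions. `FingerAnimatorSketch`, `on_enabled`, `drive_animation` and the bone naming scheme are hypothetical Python stand-ins for the behavior of VG_FingerAnimator, not its actual implementation or API.

```python
import math

Quat = tuple[float, float, float, float]  # (w, x, y, z)


def quat_mul(a: Quat, b: Quat) -> Quat:
    """Hamilton product a * b."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (
        aw * bw - ax * bx - ay * by - az * bz,
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
    )


def quat_about_z(deg: float) -> Quat:
    """Rotation of `deg` degrees around the Z-axis."""
    half = math.radians(deg) / 2
    return (math.cos(half), 0.0, 0.0, math.sin(half))


FINGERS = ("index", "middle", "ring", "pinky")  # the four non-thumb fingers
BONES = ("proximal", "middle")                  # bones animated on each finger


class FingerAnimatorSketch:
    """Hypothetical model of the finger animation described above."""

    def __init__(self, target_deg: float = 18.0):
        self.target_deg = target_deg
        self.enabled = False
        self.initial: dict[str, Quat] = {}  # local rotations captured on enable
        self.current: dict[str, Quat] = {}  # local rotations after animation

    def on_enabled(self, grasp_pose: dict[str, Quat]) -> None:
        # Called when the driver is enabled (object grasped): the primary
        # grasp finger pose becomes the starting point of the animation.
        self.initial = dict(grasp_pose)
        self.current = dict(grasp_pose)
        self.enabled = True

    def on_disabled(self) -> None:
        self.enabled = False

    def drive_animation(self, value: float) -> None:
        if not self.enabled:
            return  # not grasping: ignore trigger input
        t = min(max(value, 0.0), 1.0)  # clamp to the [0, 1] input range
        offset = quat_about_z(t * self.target_deg)
        for bone, q0 in self.initial.items():
            # Right-multiplying applies the offset in the bone's local frame.
            self.current[bone] = quat_mul(q0, offset)


# Identity rotations stand in for a primary grasp pose in this example.
pose = {f"{f}_{b}": (1.0, 0.0, 0.0, 0.0) for f in FINGERS for b in BONES}
animator = FingerAnimatorSketch()
animator.drive_animation(1.0)  # ignored: nothing grasped yet
animator.on_enabled(pose)
animator.drive_animation(0.5)
w, _, _, z = animator.current["index_proximal"]
print(round(math.degrees(2 * math.atan2(z, w)), 6))  # 9.0
```

Capturing the pose in `on_enabled` is what makes the primary grasp finger pose the starting point, and ignoring input while disabled mirrors the reason for syncing the animator with the driver's On Enabled and On Disabled events.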

Create object animation in sync with fingers

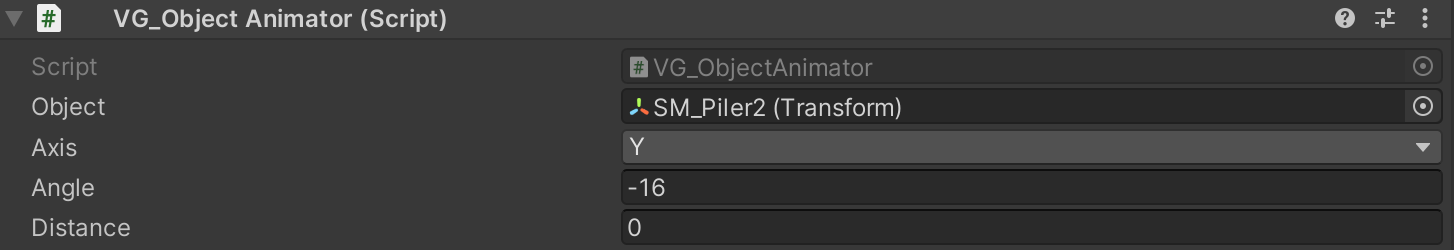

Animating the fingers in the previous step lets the non-thumb fingers close, simulating a squeezing motion on the articulated plier part. Next, the plier part in contact with these non-thumb fingers, SM_Piler2, must be animated in sync with the finger animation. This is done with VG_ObjectAnimator:

- The VG_ObjectAnimator component is added to the combined mesh transform, which keeps the setup clean by concentrating all components on the same transform. Its Object field specifies that SM_Piler2 is to be animated, with a target rotation of -16 degrees around the Y-axis of the transform, using its initial pose as the reference pose.

- To drive the animation, as with VG_FingerAnimator, the VG_ObjectAnimator.Rotate(float) function is added as a listener to the VG_AnimationDriver’s On Driven event. The same [0, 1] input value that drives the finger animation thus simultaneously rotates the SM_Plier2 transform from its initial pose to the -16-degree rotation.
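Because the finger and plier animations listen to the same On Driven event, a single trigger value keeps both in lockstep. The Python sketch below illustrates this event wiring; `AnimationDriverSketch`, `AngleAnimatorSketch` and the linear value-to-angle mapping are hypothetical, and the Input Range normalization is an assumption about how the driver treats its input, not documented VirtualGrasp behavior.

```python
from typing import Callable


class AnimationDriverSketch:
    """Hypothetical model of the driver: forwards one [0, 1] value to every listener."""

    def __init__(self, input_range: tuple[float, float] = (0.0, 1.0)):
        self.input_range = input_range
        self.enabled = False
        self.on_driven: list[Callable[[float], None]] = []
        self.on_enabled: list[Callable[[], None]] = []
        self.on_disabled: list[Callable[[], None]] = []

    def set_grasped(self, grasped: bool) -> None:
        # The driver is only enabled while the object is grasped.
        if grasped == self.enabled:
            return
        self.enabled = grasped
        for callback in (self.on_enabled if grasped else self.on_disabled):
            callback()

    def feed_trigger(self, raw: float) -> None:
        if not self.enabled:
            return
        lo, hi = self.input_range
        # Assumed: normalize the raw trigger value over the Input Range, then clamp.
        value = min(max((raw - lo) / (hi - lo), 0.0), 1.0)
        for callback in self.on_driven:
            callback(value)  # every listener receives the identical value


class AngleAnimatorSketch:
    """Maps the driven value linearly to an angle offset from the initial pose (assumed)."""

    def __init__(self, target_deg: float):
        self.target_deg = target_deg
        self.angle_deg = 0.0

    def drive(self, value: float) -> None:
        self.angle_deg = value * self.target_deg


fingers = AngleAnimatorSketch(18.0)      # Z-axis offset of the finger bones
plier_part = AngleAnimatorSketch(-16.0)  # Y-axis offset of the moving plier part

driver = AnimationDriverSketch()
driver.on_driven += [fingers.drive, plier_part.drive]

driver.set_grasped(True)
driver.feed_trigger(0.5)
print(fingers.angle_deg, plier_part.angle_deg)  # 9.0 -8.0
```

Since both listeners receive the same value, no separate synchronization logic is needed: at any trigger position, the fingers have completed the same fraction of their 18-degree closing as the plier part has of its -16-degree rotation.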