Background

VirtualGrasp is hardware-agnostic.

You can use VirtualGrasp with or without a VR headset and your scene does not need to be a VR-enabled scene.

In terms of hand control, VirtualGrasp can create natural grasp interactions with any kind of controller (or sensor), whether it is hand-held VR controllers that give an accurate 6-DoF wrist pose, finger tracking devices like Leap Motion or the Oculus finger tracking feature, or even just a computer mouse.

This is because, unlike many physics-based grasp synthesis solutions on the market that require accurate finger tracking, VirtualGrasp exploits “object intelligence”. By analyzing the shape and affordances of an object model in VR, we can synthesize grasp configurations on a hand knowing only where the wrist is, without depending on expensive physics simulations.

How to Setup

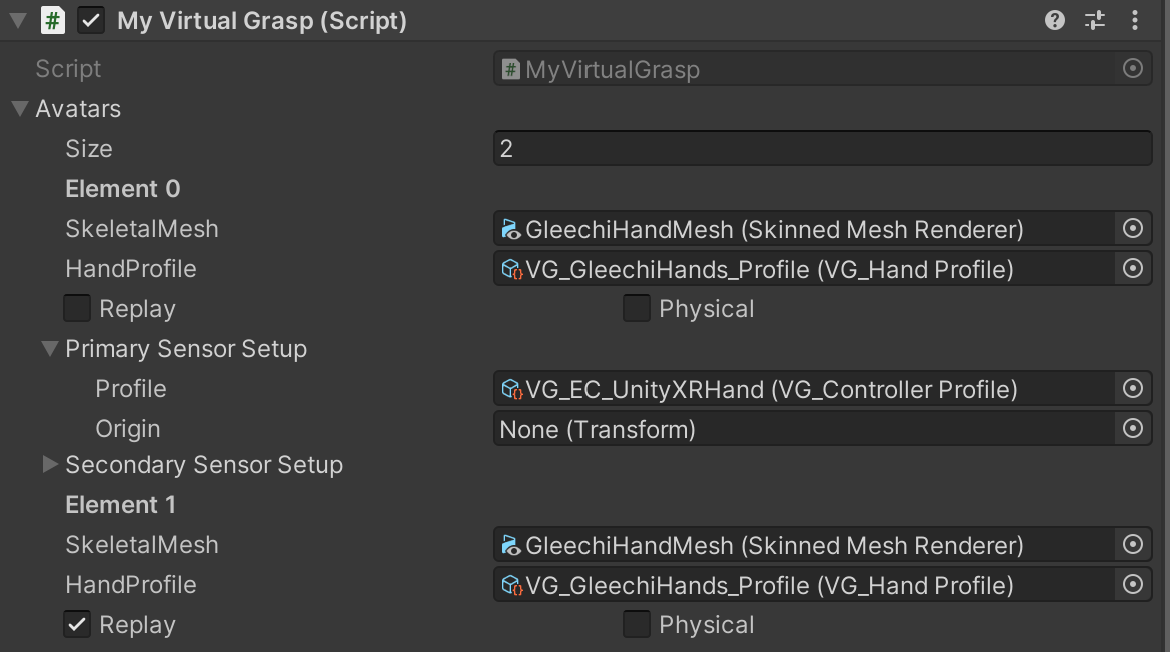

VirtualGrasp allows you to assign up to two types of sensors to an avatar, so developers can combine two sensors to control the avatar’s hands. For example, you can use a data glove to control the avatar’s finger pose and grasp triggers, while using an Oculus Touch controller to control wrist position and orientation. Though this is not the most common setup for today’s development use cases, this feature may become useful especially for research and development of new hand controllers.

In the majority of use cases only one primary sensor is used.
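To make the two-sensor split more concrete, here is a minimal Python sketch of how control channels might be divided between a primary and a secondary sensor. It is purely illustrative and not the VirtualGrasp API: the `Control` flag names, the `owner_of` helper, and the rule that the primary sensor wins on overlap are all assumptions made for this example.

```python
from enum import Flag, auto
from typing import Optional


class Control(Flag):
    """Hypothetical flags for what a sensor may control (names are illustrative)."""
    WRIST_POSITION = auto()
    WRIST_ROTATION = auto()
    HAPTICS = auto()
    FINGERS = auto()
    GRASP = auto()


def owner_of(channel: Control, primary: Control,
             secondary: Optional[Control] = None) -> str:
    """Return which sensor drives a channel.

    Assumption for this sketch: the primary sensor wins if both claim a channel.
    """
    if channel in primary:
        return "primary"
    if secondary is not None and channel in secondary:
        return "secondary"
    return "unassigned"


# The split described in the text: a hand-held controller drives the wrist,
# while a data glove drives finger pose and grasp triggers.
primary = Control.WRIST_POSITION | Control.WRIST_ROTATION | Control.HAPTICS
secondary = Control.FINGERS | Control.GRASP
```

With only a primary sensor configured, any channel it does not cover is reported as unassigned in this sketch, which mirrors the common single-sensor setup.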

In both Unity and Unreal, you can assign your controller input in MyVirtualGrasp → Avatars → Primary and Secondary Sensor Setup.

Controller Profile

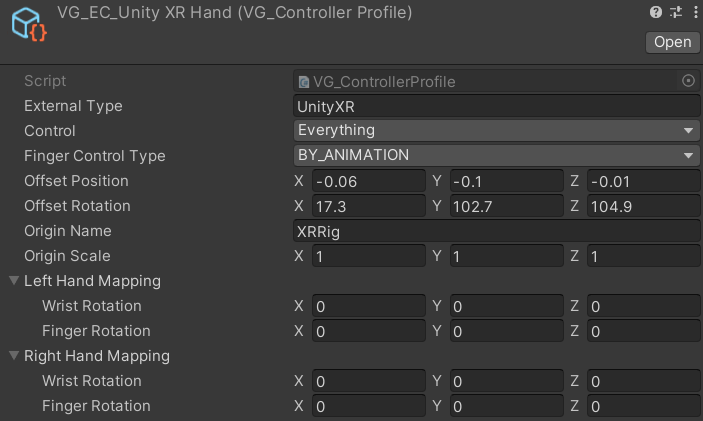

In both Unity and Unreal, the Profile option in Sensor Setup lets you select the “controller profile” for that sensor (primary or secondary). A profile groups a number of controller-related settings, so you can quickly switch between different controller inputs, such as UnityXR (e.g. supporting Quest), LeapMotion, Mouse, and others.

A set of ready-to-use controllers is explained on the VG_ExternalControllerManager page.

Elements of VG_ControllerProfile are explained in this table:

| Option | Description |

|---|---|

| External Type | name of the external controller, as a string, so one can write your own external controller. Note, here we supports adding a list of controller names, separated by ‘;’, in order of priorization. E.g. “OculusHand;UnityXR” (assuming that you have enabled both controllers properly) will use Oculus hand tracking as a priority, but if no hands are tracked, it will fallback to UnityXR controllers. |

| Control | specify what this sensor element controls. If you added two sensors, then one could control wrist position, rotation and haptics, another controls fingers and grasp for example. |

| Finger Control Type | specify how sensor controls the finger motion. See Finger Control Type. |

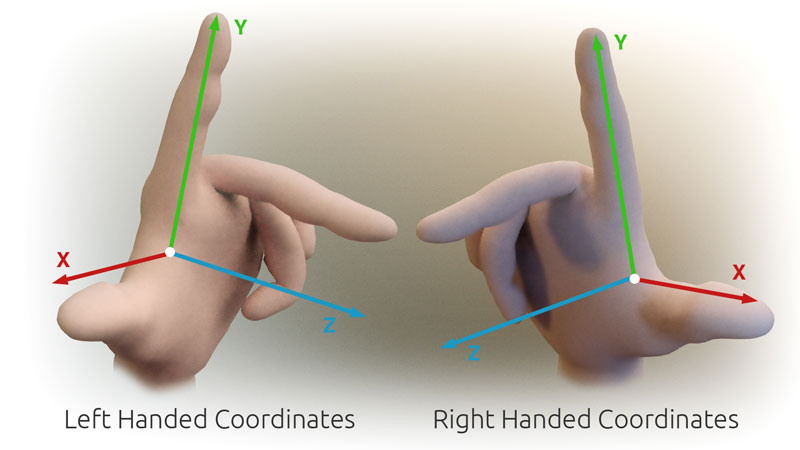

| Offset Position Offset Rotation |

when the virtual hands do not match to the position or rotation of your real hands holding the controllers, you can adjust the offset to synchronize them. Note that the hand coordinate system’s axes, XYZ, are defined like you strech out three axes with thumb, index, and middle finger (i.e. X is thumb up, Y is index forward, and Z is middle inward) of each hand. In other words, with a fully flat hand, all finger point along the positive Y axis, and your palm faces the positive Z axis. |

| Origin Name | set this to the GameObject name that should act as the origin of your controller data. For example, “XRRig” for the default Unity XR Rig (unless you renamed it). If no GameObject with this name is found (or you leave it empty), the origin will be the zero-origin. To overwrite this behavior, you can use the Origin field as described below. |

| Origin Scale | you can add a scale multiplier to the sensor data if you like. The default is (1,1,1). |

| Hand Mappings | you can find a more detailed documentation on Controller Axis Mappings. |

Source: Original by PrimalShell, Creative Commons Attribution-Share Alike 3.0 Unported license.
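The External Type fallback described in the table can be sketched as follows. This is a hedged Python illustration of the documented behavior (a ‘;’-separated list tried in priority order), not VirtualGrasp's implementation: the `pick_controller` function and the `is_tracking` dictionary are hypothetical stand-ins for however each controller reports whether it currently tracks a hand.

```python
from typing import Dict, Optional


def pick_controller(external_type: str,
                    is_tracking: Dict[str, bool]) -> Optional[str]:
    """Return the first controller in the ';'-separated list that is tracking.

    `is_tracking` is a hypothetical snapshot of each controller's state;
    controllers missing from it are treated as not tracking.
    """
    for name in (part.strip() for part in external_type.split(";")):
        if name and is_tracking.get(name, False):
            return name
    return None  # nothing in the list is currently tracking


# Hand tracking is lost, so the lower-priority controller is chosen.
active = pick_controller("OculusHand;UnityXR",
                         {"OculusHand": False, "UnityXR": True})
```

Because the list is scanned from left to right, reordering the names in the string is all it takes to change the priority in this sketch.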

Origin

While each VG_ControllerProfile contains an “Origin Name” that should act as the origin of your controller data, you can override the origin by selecting a different transform here.
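The lookup order implied by Origin Name and Origin can be summarized in a small Python sketch. It is an illustration under stated assumptions, not VirtualGrasp code: `resolve_origin` and the `scene` dictionary are hypothetical, and an origin is reduced to a position tuple for brevity, whereas a real transform also carries rotation and scale.

```python
from typing import Dict, Optional, Tuple

Vec3 = Tuple[float, float, float]
ZERO_ORIGIN: Vec3 = (0.0, 0.0, 0.0)


def resolve_origin(origin_name: str,
                   scene: Dict[str, Vec3],
                   origin_override: Optional[Vec3] = None) -> Vec3:
    """Pick the origin for controller data.

    `scene` is a hypothetical map from object names to positions.
    """
    if origin_override is not None:
        return origin_override      # an explicit Origin takes precedence
    if origin_name and origin_name in scene:
        return scene[origin_name]   # object found by its Origin Name
    return ZERO_ORIGIN              # empty or unknown name: zero-origin


scene = {"XRRig": (0.0, 1.0, 0.0)}
by_name = resolve_origin("XRRig", scene)
```

Here an empty or unknown Origin Name falls through to the zero-origin, as described in the table, while passing `origin_override` bypasses the name lookup entirely.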